An exhaustive study of the likelihood that Russia will deploy autonomous robots in future conflicts, analyzing Vladimir Putin’s position and comparing it with US military strategies.

Exploring Putin’s stance on AI and autonomous weaponry

Russian President Vladimir Putin’s recent pronouncement suggests an ambivalent stance toward restrictions on artificial intelligence (AI) and robotics in the military context. Putin’s comment reveals a tendency to postpone regulation of these technologies until threats materialize. This attitude raises critical uncertainties about the integration of AI autonomy into the Russian military arsenal, as opposed to the more cautious and ethical policies adopted by its rivals, especially the United States.

Military AI can range from basic automation to advanced self-learning systems. While the Pentagon has taken a rigorous approach to ethics and AI combat operations, Russia’s and China’s reluctance to follow strict regulations in this area raises serious concerns. It is notable that the Pentagon maintains the “man in the loop” doctrine for autonomous weapons, requiring human intervention in decisions to use lethal force.

However, the advancement of AI raises questions about its application in non-lethal defensive measures and the willingness of Russia and China to confront armies of AI-armed robots that operate autonomously.

The lack of a global consensus on restrictions on military AI underscores the importance of understanding Russia’s posture to anticipate its role in the changing landscape of AI and military robotics.

Analysis of the integration of AI in Russian military weapons

Russia’s ambitions in military technology are reflected in its initiatives in mechanical engineering, including the production of tanks, robots, lasers and armored vehicles. Despite its traditional reliance on the physical manpower of soldiers, AI represents a significant factor in its military strategy. During a Defense Ministry meeting, Putin acknowledged growing interest in AI but did not commit to a clear ethical approach to its development and application.

Assessing the risks associated with autonomous deployment of AI in the military is crucial for responsible use. In this context, it is notable that the US military already employs AI to generate weapons recommendations during attacks while still requiring human intervention in the decision (human-in-the-loop). This practice contrasts with Russia’s less clear-cut approach to ethical considerations for military AI applications.

Modern AI integrations in military engineering emphasize the methodical use of force, recommending appropriate weaponry for specific operations. The risks of postponing regulation until tangible threats emerge highlight the need for foresight in the face of rapid AI advancement.

Ethical and strategic implications in autonomous warfare

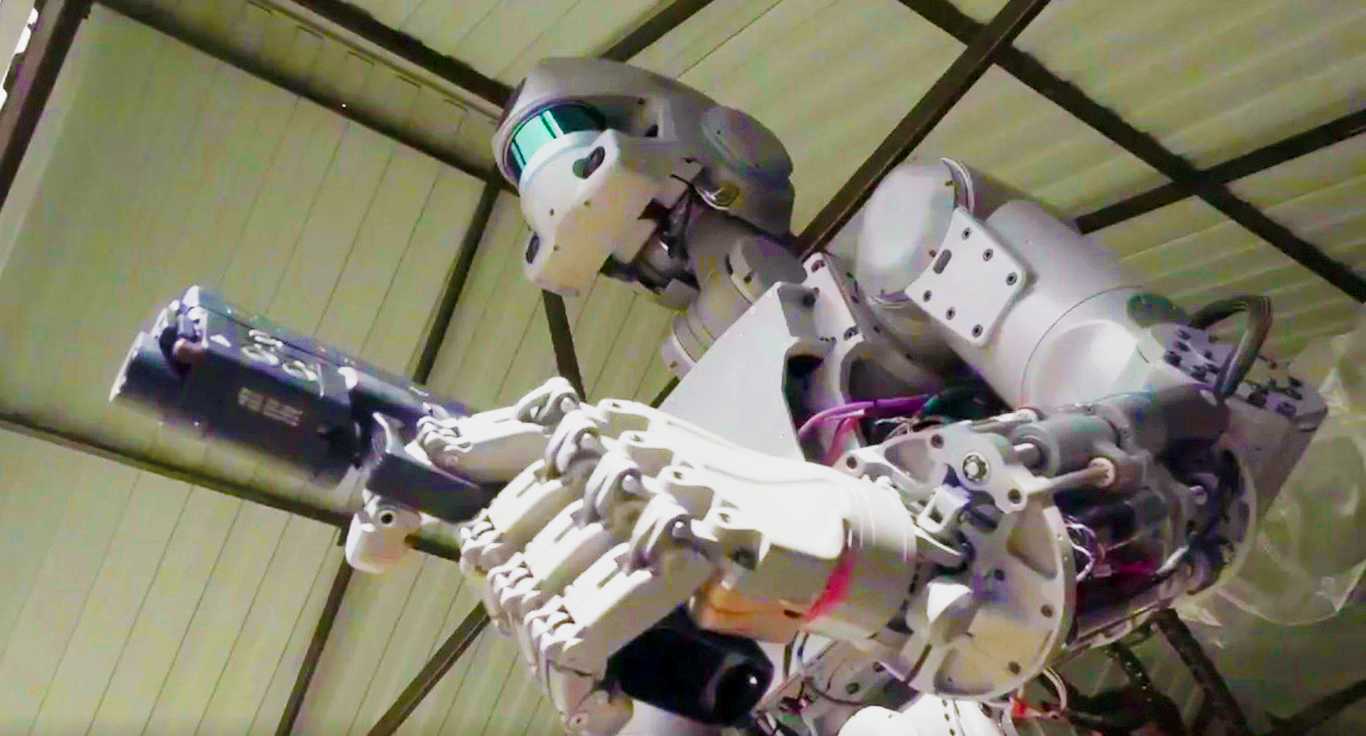

Russia’s Skybot F-850 humanoid robot holds a Russian flag with cosmonaut Alexey Ovchinin for a photo in the Zvezda service module of the International Space Station in this photo released on Sept. (Image credit: Roscosmos via Twitter)

Despite claims of “weapons superiority,” both Russia and the United States can simulate war. However, industrial capacity becomes a critical factor when evaluating military technological development. Limits on that capacity could hamper Russian advances in next-generation warfighting technology, while the U.S. military has a robust industrial capacity to rebuild armed forces during protracted conflicts.

Ethical assessments are critical to mitigating the unintended consequences of autonomous warfare. They contribute to transparency in developing and deploying autonomous weapons and build public trust. Adopting open ethical practices strengthens the legitimacy of autonomous warfare technologies.

Furthermore, these assessments ensure compliance with international humanitarian law, including the Geneva Conventions, and contribute to long-term strategic stability, avoiding destabilizing arms races in autonomous weapons.